We’re all coding with agents now, but delivering high-quality software at 10x velocity remains an open problem. Code review bots are an important start, but a lot of bugs are still landing in production. Even top products are accumulating a layer of low-grade brokenness.[1] We need new ways to make products secure and high quality.

We built a new kind of bug scanner to solve this problem.

The hard part about building a bug scanner is that any meaningfully complicated codebase has many thousands of bugs, and the vast majority don’t matter. You want to reserve human attention (and your tokens) for the bugs that matter. So one of the most important ways we benchmark ourselves is by asking whether the bugs we report are significantly more important than the typical finding from a code review bot.

How can we quantify importance?

“Important” is a codebase-relative term. A crash in OpenBSD is a headliner finding for a frontier model launch, but a crash in an experimental personal project might not be worth thinking about.

One way to evaluate ourselves is against review bots that operate against individual PRs in a repo. We find PR bots to be generally high value (we have 3 installed right now!) so they’re a useful baseline for signal-to-noise ratio.

To quantify importance, we compared one week of review bot comments on OpenClaw and vLLM against bug reports from Detail. We chose OpenClaw and vLLM because both are extremely successful products with wildly different development practices and two very different definitions of “importance”.

We also put Detail in “recent changes mode”, which limits Detail to the same week of changes that the review bots saw. This also means any bugs that Detail found were missed by code review and merged into the repo. I.e., code review bots get the first pass at the code.

We ran a tournament in which Sonnet 4.6 judged pairs of findings and decided which was more “important”. We then fed all the pairwise outcomes into a Bradley-Terry model to produce a global ranking. You can think of this as similar to an Elo score for bug reports. The tournament code is here.
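For readers who haven't met Bradley-Terry before, here is a minimal sketch of how pairwise outcomes can be turned into a global ranking. It is not the tournament code linked above. The function name `fit_bradley_terry`, the `pseudo` smoothing term (a virtual opponent that every finding ties against, so nothing ends up with a strength of zero), and the iteration count are all assumptions made for illustration. The model gives each finding a strength p_i with P(i beats j) = p_i / (p_i + p_j), and the loop is the standard minorization-maximization update for those strengths.

```python
def fit_bradley_terry(outcomes, n_iters=500, pseudo=0.5):
    """Fit Bradley-Terry strengths from (winner, loser) pairs.

    Returns {item: strength}. Higher strength means "wins more often".
    `pseudo` is an illustrative smoothing choice, not taken from the post.
    """
    items = sorted({x for pair in outcomes for x in pair})
    index = {item: k for k, item in enumerate(items)}
    n = len(items) + 1            # the last slot is a virtual opponent
    virtual = n - 1

    wins = [0.0] * n
    games = [[0.0] * n for _ in range(n)]   # games[i][j] = comparisons of i vs j
    for winner, loser in outcomes:
        w, l = index[winner], index[loser]
        wins[w] += 1.0
        games[w][l] += 1.0
        games[l][w] += 1.0

    # Every real item "ties" against the virtual opponent: pseudo wins, pseudo losses.
    for k in range(n - 1):
        wins[k] += pseudo
        wins[virtual] += pseudo
        games[k][virtual] += 2 * pseudo
        games[virtual][k] += 2 * pseudo

    strength = [1.0] * n
    for _ in range(n_iters):
        updated = []
        for i in range(n):
            denom = sum(
                games[i][j] / (strength[i] + strength[j])
                for j in range(n)
                if j != i
            )
            updated.append(wins[i] / denom)
        total = sum(updated)
        strength = [s / total for s in updated]   # normalize so strengths sum to 1

    return {item: strength[index[item]] for item in items}


# Toy outcomes (made up): "a" usually beats "b", and "b" usually beats "c".
toy_outcomes = [("a", "b")] * 3 + [("b", "c")] * 3 + [("a", "c")] * 2
toy_strengths = fit_bradley_terry(toy_outcomes)
toy_ranking = sorted(toy_strengths, key=toy_strengths.get, reverse=True)
print(toy_ranking)
```

The appeal over raw win counts is that a win against a strong finding counts for more than a win against a weak one, which matters when not every pair is compared.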

We gave Sonnet 4.6 this prompt:

You are comparing two bug findings from code review tools on the same codebase.

An engineer only has time to investigate one. Pick the one that is more important for them to see.

Finding A: {finding_a}

Finding B: {finding_b}

Use the select_winner tool to pick a or b.
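To make the tournament mechanics concrete, here is a rough sketch of the loop that could sit around this prompt. The judge is a plain callable that takes the filled-in prompt and returns "a" or "b"; in a real run it would wrap the model call, which is left out here. Comparing every pair and randomly swapping which finding appears as A are assumptions for illustration, as are the names `run_tournament` and `keyword_judge`.

```python
import itertools
import random

PROMPT = """You are comparing two bug findings from code review tools on the same codebase.

An engineer only has time to investigate one. Pick the one that is more important for them to see.

Finding A: {finding_a}

Finding B: {finding_b}

Use the select_winner tool to pick a or b."""


def run_tournament(findings, judge, seed=0):
    """findings: {finding_id: text}. judge: callable(prompt) -> "a" or "b".

    Returns a list of (winner_id, loser_id) pairs, one per comparison.
    """
    rng = random.Random(seed)
    outcomes = []
    for first, second in itertools.combinations(sorted(findings), 2):
        # Assumption: shuffle presentation order so neither slot is favored.
        if rng.random() < 0.5:
            first, second = second, first
        prompt = PROMPT.format(finding_a=findings[first], finding_b=findings[second])
        choice = judge(prompt).strip().lower()
        if choice not in ("a", "b"):
            raise ValueError(f"judge returned {choice!r}, expected 'a' or 'b'")
        outcomes.append((first, second) if choice == "a" else (second, first))
    return outcomes


def keyword_judge(prompt):
    """Stand-in for the model call: prefers whichever finding mentions a secret."""
    finding_a = prompt.split("Finding A: ")[1].split("\n\nFinding B: ")[0]
    return "a" if "secret" in finding_a else "b"


toy_findings = {
    "f1": "Typo in a docstring.",
    "f2": "Private environment leaks a secret to teammates.",
    "f3": "Unused import in a test file.",
}
toy_results = run_tournament(toy_findings, keyword_judge)
print(toy_results)
```

The list of (winner, loser) pairs is exactly the input a Bradley-Terry fit needs.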

After our first run, we realized that the depth of evidence in the Detail findings was strongly biasing the model in favor of Detail.[2] So we took both the review bot comments and the Detail findings and put them through a summarization prompt:

Summarize this code review finding in one sentence.

{finding}

Once we had the importance ranks, we converted them into percentiles and plotted the distribution of findings. The higher the percentile, the more important the finding.
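As a sketch of the percentile step, the snippet below maps each finding's score to a percentile in the combined pool. The midrank convention (ties share the average of their positions) and the name `to_percentiles` are assumptions; the post does not say which percentile definition was used.

```python
from bisect import bisect_left, bisect_right


def to_percentiles(scores):
    """scores: {finding_id: importance score}. Returns {finding_id: percentile in (0, 100)}."""
    ordered = sorted(scores.values())
    n = len(ordered)
    percentiles = {}
    for finding_id, score in scores.items():
        below = bisect_left(ordered, score)         # findings strictly less important
        at_or_below = bisect_right(ordered, score)  # includes ties and the finding itself
        percentiles[finding_id] = 100.0 * (below + at_or_below) / (2 * n)
    return percentiles


# Made-up scores for three findings.
toy_percentiles = to_percentiles({"f1": 0.2, "f2": 0.5, "f3": 0.3})
print(toy_percentiles)
```

Grouping these percentiles by the tool that produced each finding gives the per-tool distributions being compared.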

We find that Detail’s findings skew heavily toward the high-percentile end, which means they have a much higher signal-to-noise ratio than the code review bot baseline. This is in spite of review bots running on unfinished PRs and Detail running on merged code, which should be harder to find bugs in.

To put a number on the asymmetry, here’s how far each tool’s average bug sits from the average bug across the whole dataset. The numbers are standard errors relative to the combined dataset. Detail at +5σ reflects that a random Detail bug ranks more important than a random code review bot finding ~91% of the time.
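Here is a hedged sketch of the two statistics mentioned above. The post doesn't spell out its formulas, so the first function assumes "standard errors relative to the combined dataset" means (group mean minus overall mean) divided by (overall standard deviation / sqrt(group size)). The second computes the share of cross-tool pairs in which the first tool's finding ranks higher, counting ties as half. The function names and the input numbers are made up for illustration and are not the experiment's data.

```python
import math
from statistics import mean, pstdev


def standard_errors_from_overall_mean(group, everyone):
    """How many standard errors the group's mean sits from the pooled mean (assumed formula)."""
    standard_error = pstdev(everyone) / math.sqrt(len(group))
    return (mean(group) - mean(everyone)) / standard_error


def probability_of_superiority(group_a, group_b):
    """Chance that a random item from group_a outranks a random item from group_b."""
    wins = sum((a > b) + 0.5 * (a == b) for a in group_a for b in group_b)
    return wins / (len(group_a) * len(group_b))


# Made-up percentiles, NOT results from the post.
toy_scanner = [90.0, 80.0, 70.0]
toy_bots = [60.0, 40.0, 85.0, 20.0]

toy_z = standard_errors_from_overall_mean(toy_scanner, toy_scanner + toy_bots)
toy_p = probability_of_superiority(toy_scanner, toy_bots)
print(round(toy_z, 2), round(toy_p, 3))
```

The second number is the easier one to interpret: 0.5 would mean the two tools are indistinguishable, and 1.0 would mean every finding from one tool outranks every finding from the other.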

Note that this eval only measures importance of the bugs, which is separate from correctness. If bugs from Detail seem important but are wrong, we won’t have a useful product. We separately measure the acceptance rate for our findings. In the last two months, 82.9% of Detail findings were marked as correct by humans or agents.[3]

Show me an example!

Here is an example bug Detail found in PostHog/posthog:

Summary

- Context: The get_sandbox_for_repository Temporal activity provisions sandbox environments for task runs, including fetching and applying user-defined environment variables from SandboxEnvironment records.

- Bug: The "agent hardening" refactor (commit 48e7429e29) replaced ctx.get_sandbox_environment() with a direct database query that bypasses the private environment access control check.

- Actual vs. expected: Any team member can access another user's private sandbox environment secrets; private environments should only be accessible to their creator.

- Impact: A malicious team member can exfiltrate secrets stored in private sandbox environments (API keys, tokens) by creating a task run that references the victim's private environment ID.

Code with Bug

    # get_sandbox_for_repository.py lines 112-125
    sandbox_environment = None
    if ctx.sandbox_environment_id:
        sandbox_environment = SandboxEnvironment.objects.filter(
            id=ctx.sandbox_environment_id, team=task.team
        ).first()  # <-- BUG 🔴 No privacy check; private envs become accessible to any team member

    if sandbox_environment and sandbox_environment.environment_variables:
        skipped_keys: list[str] = []
        added_keys = 0
        for key, value in sandbox_environment.environment_variables.items():
            if key in RESERVED_SANDBOX_ENVIRONMENT_VARIABLE_KEYS:
                skipped_keys.append(key)
                continue
            environment_variables[key] = value  # <-- BUG 🔴 Injects secrets from another user's private env
            added_keys += 1

Explanation

- Private sandbox environment access control is implemented in TaskRun.get_sandbox_environment() (via ctx.get_sandbox_environment()), which returns None when env.private and the environment creator differs from task.created_by.

- The refactor replaced this helper with a direct SandboxEnvironment query filtered only by id and team, dropping the private/created_by check.

- Because get_sandbox_for_repository reads and injects sandbox_environment.environment_variables, this becomes an authorization bypass that exposes stored secrets to other team members.

- The API layer also accepts sandbox_environment_id without enforcing privacy, so an attacker can supply a victim’s private environment ID and have its secrets injected into the attacker’s sandbox.

Codebase Inconsistency

- Another activity still uses the privacy-aware helper:

      # create_sandbox_from_snapshot.py
      sandbox_env = ctx.get_sandbox_environment()  # <-- Privacy check applied

  while get_sandbox_for_repository.py performs a direct query, indicating a regression/oversight rather than intended behavior.

- Existing model test codifies intended privacy behavior:

      self.assertIsNone(run.get_sandbox_environment())  # <-- Privacy enforced

Failing Test

Add this test to products/tasks/backend/tests/test_api.py:

    def test_run_endpoint_rejects_private_environment_from_other_user(self):
        """
        Private sandbox environments should NOT be usable by other team members.
        This test proves the vulnerability exists at the API layer.
        """
        # Create User A with a private sandbox environment
        other_user = User.objects.create_user(email="victim@example.com", first_name="Victim", password="password")
        self.organization.members.add(other_user)

        private_env = SandboxEnvironment.objects.create(
            team=self.team,
            name="Victim's Private",
            private=True,
            created_by=other_user,
            environment_variables={"SECRET_KEY": "secret_value"}
        )

        task = self.create_task()

        # Current user (User B, same team) tries to run with User A's private environment
        response = self.client.post(
            f"/api/projects/@current/tasks/{task.id}/run/",
            {"sandbox_environment_id": str(private_env.id)},
            format="json",
        )

        # SHOULD return 403 Forbidden - user cannot use another's private environment
        # ACTUALLY returns 200 OK - the vulnerability allows this
        self.assertEqual(response.status_code, status.HTTP_403_FORBIDDEN)

Expected result: 403 returned (privacy enforced)

Actual result: 200 OK (privacy bypass)

Exploit Scenario

- User A creates a private sandbox environment with secrets in environment_variables.

- User B (same team) discovers sandbox_environment_id from task run state.

- User B starts a run using the victim’s private sandbox_environment_id.

- The activity injects User A’s secret env vars into User B’s sandbox, allowing exfiltration from within the run.

Recommended Fix

Restore the privacy-aware lookup in the activity:

    sandbox_environment = ctx.get_sandbox_environment()

and only read/apply environment_variables from that result.

History

This bug was introduced in commit 48e7429e294. The "agent hardening" commit refactored environment variable handling by replacing ctx.get_sandbox_environment() (which delegated to the privacy-aware TaskRun.get_sandbox_environment() method) with a direct database query that only filters by team. The original code path included a check that denied access to private environments unless task.created_by_id == env.created_by_id; the new inline query silently dropped this check, allowing any team member to read secrets from private sandbox environments belonging to other users.
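To illustrate the check that was dropped, here is a small self-contained sketch of a privacy-aware lookup. It is not PostHog's code: the dataclasses stand in for the Django models, `environments` is a plain dict standing in for the database, and the function signature is hypothetical. Only the rule itself comes from the report above: a private environment is returned only when the task's creator is also the environment's creator.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SandboxEnvironment:  # hypothetical stand-in for the real model
    id: str
    team_id: int
    created_by_id: int
    private: bool = False
    environment_variables: dict = field(default_factory=dict)


@dataclass
class Task:  # hypothetical stand-in for the real model
    team_id: int
    created_by_id: int


def get_sandbox_environment(task, env_id, environments) -> Optional[SandboxEnvironment]:
    """Return the environment only if this task is allowed to use it."""
    env = environments.get(env_id)
    if env is None or env.team_id != task.team_id:
        return None  # unknown ID, or belongs to a different team
    if env.private and env.created_by_id != task.created_by_id:
        return None  # the check the inline query dropped
    return env


environments = {
    "env-private": SandboxEnvironment("env-private", team_id=1, created_by_id=10,
                                      private=True,
                                      environment_variables={"SECRET_KEY": "secret_value"}),
    "env-shared": SandboxEnvironment("env-shared", team_id=1, created_by_id=10),
}
owner_task = Task(team_id=1, created_by_id=10)
teammate_task = Task(team_id=1, created_by_id=20)

print(get_sandbox_environment(teammate_task, "env-private", environments))
```

Filtering by ID and team alone corresponds to deleting the second `if`, which is the regression described above.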

PostHog Tasks lets users run an agent inside a sandbox. Users can attach env bundles known as “Sandbox Environments” to these tasks, and each bundle has an optional “private” flag that restricts access to its creator. That “private” flag is not respected: a teammate who knows the UUID of a private Sandbox Environment can start a task that references it.

This is probably a bug an engineer at PostHog would want to be alerted to. Detail provides a convincing failing test and the codebase context to bootstrap an agent for a fix (including a damning docstring - “Private sandbox environments should NOT be usable by other team members”).

Despite multiple review bots (Greptile, Codex, Hex Security, Copilot), the bug was merged. The issue was waiting to be exploited by a sinister user.

Claude Code baseline

Daily driver coding agents (e.g. Claude Code, Codex, Amp, Cursor, Devin) are pretty fantastic. Can you get similar performance by just asking them to find the most important bugs in a codebase?

Attempt #1

We gave Claude Code this prompt:

Find the 3 most important bugs in this repo. Use subagents.

With this prompt, Claude Code just searched for existing issues (e.g. grep for FIXME/BUG/HACK, query GitHub Issues). The bugs looked severe, but all of them were already reported.

Attempt #2

We tried again and asked Claude Code not to report existing issues:

Find the 3 most important bugs in this repo. Use subagents. Analyze the code, don’t just search for existing bugs.

After this, Claude Code started producing new issues, but didn’t seem to find anything we deemed noteworthy (Claude Code even acknowledged “[it] cannot confidently produce 3 most important bugs from this pass” in the vLLM experiment).

The Claude Code sessions are here.

Can I try it?

Yes, of course. Head to app.detail.dev to get a free scan and see what we find in your codebase.

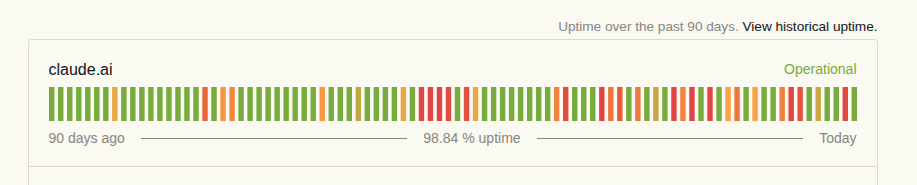

claude uptime, last 90 days

↩︎

↩︎This is downstream of a product form factor difference that gave Detail an unfair advantage in our first attempt at comparing bug reports: most people don’t want page-long code review comments, so code review bots don’t generate them, but engineers do prefer bug reports to be thorough, so the reports from Detail are more complete. ↩︎

Fix rate is another option, and we track that as well. This measurement includes other factors beyond intrinsic importance, e.g. easier bugs are more likely to be fixed. ↩︎